3) Examples

Here we provide tangible mockups that illustrate three of the examples suggested above. The first and second illustrations were designed by Katherine Mustelier, and the third by Jane Im.

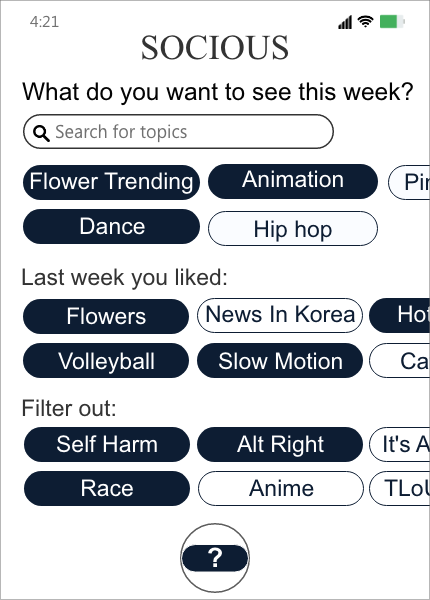

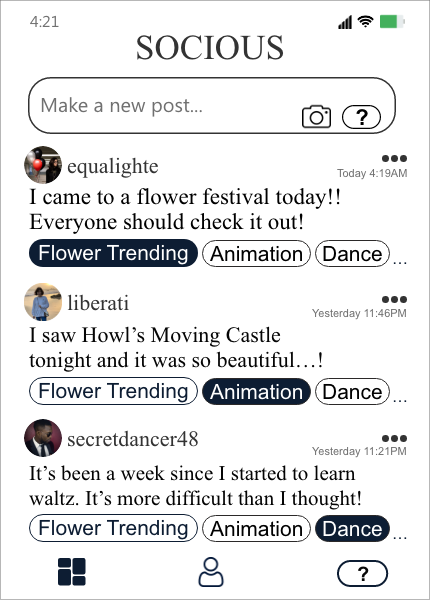

Imagine that Lucy logs onto a new platform called Socious, and the platform greets them by asking, “What do you want to see this week?” Lucy sees keywords that Socious recommends based on topic modeling, such as “Flower Tending,” “Animation,” and “Dance.” Lucy decides they would like to see more about flowers, dance, and animation. Lucy also notices that they can specify topics they do not want to see, and that they can select from tags covering well-known triggering topics. Lucy selects “Self Harm,” “Alt Right,” and “Race” for exclusion from their feed. As Lucy scrolls down the feed, they see the new preferences reflected immediately. After a week, Socious asks Lucy for their topic preferences again, though Lucy can change how often it asks at any time.
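The feed behavior in Lucy's scenario can be sketched as a filter over topic labels. This is a minimal illustration, not how Socious (a mockup) works: it assumes each post already carries topic labels produced upstream by some topic model, the names `Post`, `TopicPreferences`, and `filter_feed` are hypothetical, and giving excluded topics precedence over included ones is also an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    post_id: int
    topics: set[str]  # assumed: labels assigned upstream by some topic model


@dataclass
class TopicPreferences:
    include: set[str] = field(default_factory=set)
    exclude: set[str] = field(default_factory=set)


def filter_feed(posts: list[Post], prefs: TopicPreferences) -> list[Post]:
    """Hide posts with any excluded topic, then rank included-topic posts first."""
    visible = [p for p in posts if not (p.topics & prefs.exclude)]
    # sorted() is stable, so posts keep their original order within each group.
    return sorted(visible, key=lambda p: not (p.topics & prefs.include))


prefs = TopicPreferences(
    include={"Flower Tending", "Animation", "Dance"},
    exclude={"Self Harm", "Alt Right", "Race"},
)
posts = [
    Post(1, {"News"}),
    Post(2, {"Dance"}),
    Post(3, {"Dance", "Self Harm"}),
    Post(4, {"Flower Tending"}),
]
feed = filter_feed(posts, prefs)
print([p.post_id for p in feed])  # [2, 4, 1]
```

Post 3 is hidden even though it is labeled “Dance,” because it also carries the excluded “Self Harm” label. Post 1 matches no preference and stays visible but is ranked last.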

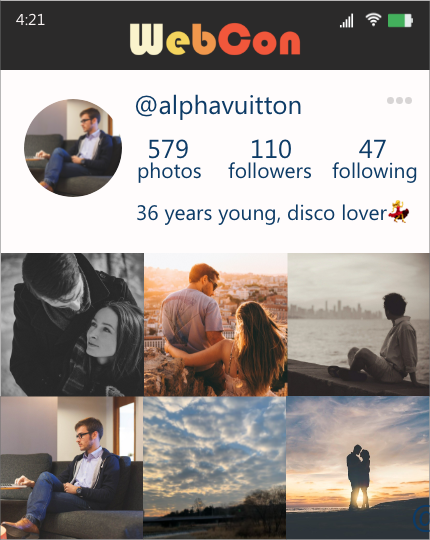

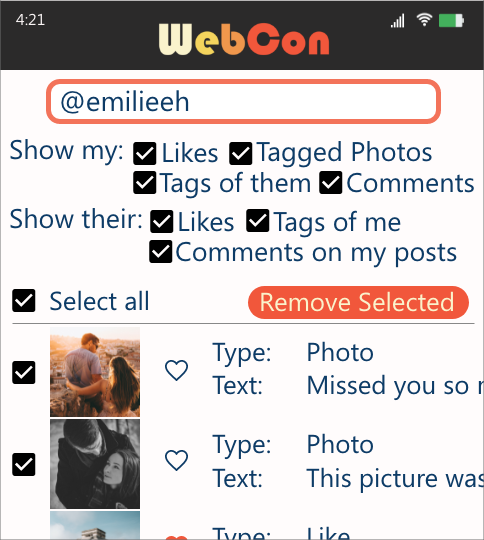

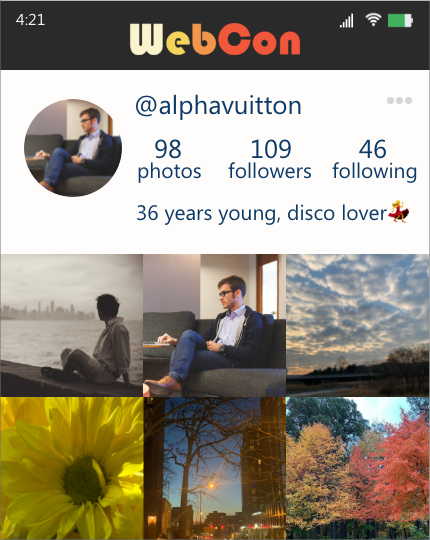

Imagine that Jon logs into WebCon, a new social platform. Jon recently went through a breakup and wants to remove all data related to his ex-partner, Emily. On the dashboard, Jon queries for his posts that Emily liked, was tagged in, or commented on, as well as Emily’s posts that he liked, was tagged in, or commented on. He decides to delete all of his posts related to Emily. He also removes his likes and comments on Emily’s posts, along with the tags of himself in them. When Jon returns to his profile page, he sees that these posts have been removed. Jon also deletes all of Emily’s comments on his remaining posts. However, Jon cannot delete Emily’s posts featuring him, because those posts belong to Emily.
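The query and ownership rules in Jon's scenario can be expressed over a small data model. This is a hypothetical sketch rather than WebCon's design: `Post`, `related_posts`, `delete_post`, and `withdraw_interactions` are illustrative names, comments are simplified to the set of users who commented, and a tag is modeled as a user tagged in a post.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    # Hypothetical model; comments are simplified to the set of commenters.
    post_id: int
    author: str
    likes: set[str] = field(default_factory=set)
    tagged: set[str] = field(default_factory=set)
    commenters: set[str] = field(default_factory=set)


def touched_by(post: Post, user: str) -> bool:
    """True if `user` liked, is tagged in, or commented on `post`."""
    return user in post.likes or user in post.tagged or user in post.commenters


def related_posts(posts: list[Post], me: str, other: str) -> tuple[list[Post], list[Post]]:
    """Return (my posts that `other` touched, `other`'s posts that I touched)."""
    mine = [p for p in posts if p.author == me and touched_by(p, other)]
    theirs = [p for p in posts if p.author == other and touched_by(p, me)]
    return mine, theirs


def delete_post(posts: list[Post], post: Post, requester: str) -> None:
    """Only a post's author may delete the post itself."""
    if post.author != requester:
        raise PermissionError(f"{requester} does not own post {post.post_id}")
    posts.remove(post)


def withdraw_interactions(post: Post, me: str) -> None:
    """Remove my own like, comments, and tag from someone else's post."""
    post.likes.discard(me)
    post.tagged.discard(me)
    post.commenters.discard(me)


posts = [
    Post(1, "jon", likes={"emily"}),
    Post(2, "jon", likes={"sam"}),
    Post(3, "emily", tagged={"jon"}),
    Post(4, "emily"),
]
mine, theirs = related_posts(posts, "jon", "emily")
for p in mine:
    delete_post(posts, p, "jon")  # Jon may delete his own posts
for p in theirs:
    withdraw_interactions(p, "jon")  # but on Emily's posts he can only withdraw his own traces
print([p.post_id for p in posts])  # [2, 3, 4]
```

Calling `delete_post(posts, posts[2], "jon")` on Emily's post 4 would raise `PermissionError`, which mirrors the constraint that Jon cannot delete Emily's posts.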

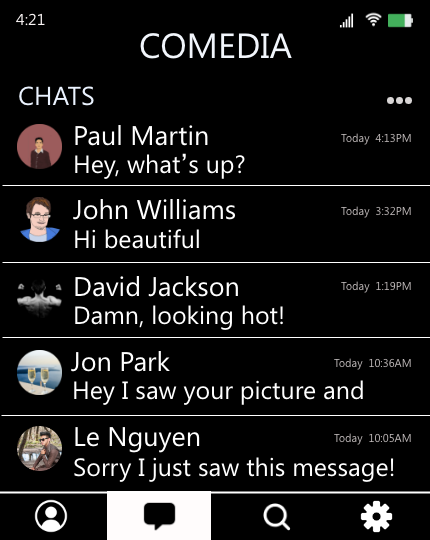

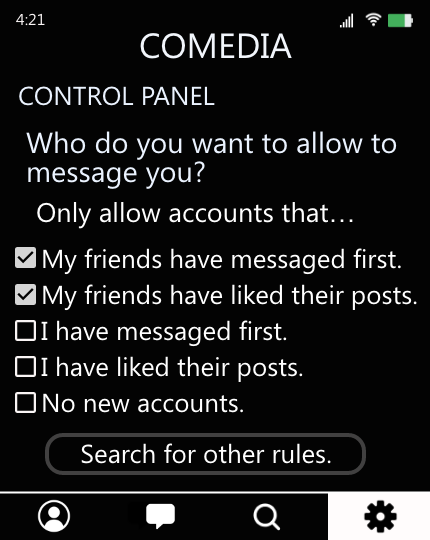

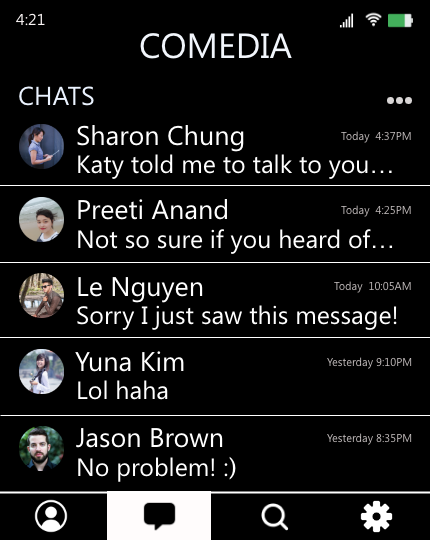

Imagine that Sannvi has been receiving many unwanted messages on CoMedia. The messages often include compliments about her looks, which make her uncomfortable. Sannvi decides she does not want to see such messages, so she goes to the “Control Panel” and applies network-centric rules such as “Only allow people my friends have messaged to message me.” Now, if a stranger messages Sannvi on CoMedia, the system first checks whether the sender has ever interacted with Sannvi or any of her friends on the platform. If not, CoMedia routes the stranger’s message to a separate queue that Sannvi can review later if she wishes.
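The check CoMedia performs amounts to a set intersection over Sannvi's local social graph. The sketch below implements the broader lookup described above (any prior interaction with Sannvi or one of her friends) rather than only prior messages. All names (`MessageFilter`, `is_known`, `deliver`) are hypothetical, and treating friendships and interactions as undirected and letting friends bypass the check are assumptions.

```python
from collections import defaultdict


class MessageFilter:
    """Routes messages with a network-centric rule (hypothetical sketch)."""

    def __init__(self) -> None:
        self.friends: dict[str, set[str]] = defaultdict(set)
        # Undirected record of prior interactions (messages, likes, comments, ...).
        self.interactions: dict[str, set[str]] = defaultdict(set)
        self.inbox: dict[str, list[tuple[str, str]]] = defaultdict(list)
        self.review_queue: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add_friendship(self, a: str, b: str) -> None:
        self.friends[a].add(b)
        self.friends[b].add(a)

    def record_interaction(self, a: str, b: str) -> None:
        self.interactions[a].add(b)
        self.interactions[b].add(a)

    def is_known(self, sender: str, recipient: str) -> bool:
        """Friends pass; anyone else must have interacted with the recipient or a friend."""
        circle = {recipient} | self.friends[recipient]
        return sender in self.friends[recipient] or bool(self.interactions[sender] & circle)

    def deliver(self, sender: str, recipient: str, text: str) -> None:
        """Send known senders to the inbox and strangers to a separate review queue."""
        if self.is_known(sender, recipient):
            self.inbox[recipient].append((sender, text))
        else:
            self.review_queue[recipient].append((sender, text))


f = MessageFilter()
f.add_friendship("sannvi", "priya")
f.record_interaction("alex", "priya")  # e.g. alex once commented on Priya's post
f.deliver("alex", "sannvi", "Priya said I should say hi")
f.deliver("stranger", "sannvi", "You look great")
print(f.inbox["sannvi"])  # [('alex', 'Priya said I should say hi')]
print(f.review_queue["sannvi"])  # [('stranger', 'You look great')]
```

Routing to a queue instead of dropping the message keeps the decision with Sannvi, matching the scenario in which she can still review filtered messages later.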